The Ironclad project at Microsoft Research is using a set of new and modified tools based on automated theorem proving to build Ironclad services. An Ironclad service guarantees to remote parties that every CPU instruction the service executes adheres to a high-level specification, convincing clients that the service will be worthy of their trust. To provide such end-to-end guarantees, we built a full stack of verified software. That software includes a verified kernel; verified drivers; verified system and cryptography libraries including SHA, HMAC, and RSA; and four Ironclad Apps. As a concrete example, our Ironclad database provably provides differential privacy to its data contributors. In other words, if a client encrypts her personal data with the database’s public key, then it can only be decrypted by software that guarantees, down to the assembly level, that it preserves differential privacy when releasing aggregate statistics about the data.

We’ve also recently expanded the scope of our verification efforts to distributed systems, which are notorious for harboring subtle bugs. We have developed IronFleet, a methodology for building practical and provably correct distributed systems. We demonstrated the methodology on a complex implementation of a Paxos-based replicated state machine library and a lease-based sharded key-value store. We proved that each obeys a concise safety specification, as well as desirable liveness requirements. Each implementation achieves performance competitive with a reference system.

In this talk, we describe our methodology, formal results, and lessons we learned from building large stacks of verified systems software. In pushing automated verification tools to new scales (over 70K lines of code and proof so far), our team has both benefited from automated verification techniques and uncovered new challenges in using them.

By continuing to push verification tools to larger and more complex systems, Ironclad ultimately aims to raise the standard for security- and reliability-critical systems from “tested” to “correct”.

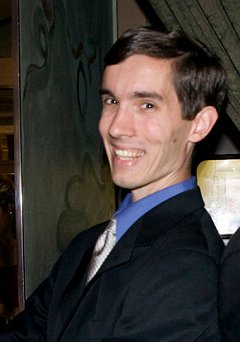

I joined the Security and Privacy Research Group here at Microsoft Research in 2010. I am interested in a broad range of security topics (e.g., network and system security, applied cryptography, usable security, and data privacy), as well as topics such as operating system design, distributed systems, and mobile computing. My current work focuses on protocols for verifiable computation and zero-knowledge proofs, building practical, formally verified secure systems, and developing next-generation application models. I have been fortunate to work with many excellent interns, including Ben Kreuter, Karthik Nagaraj, Mariana Raykova, Joshua Schiffman, Srinath Setty, Sai Deep Tetali, Xi Xiong, and Samee Zahur.

I completed my PhD at Carnegie Mellon University under the supervision of Adrian Perrig. My dissertation studies the design, implementation, and evaluation of a combination of hardware, software, and cryptographic primitives for extending the trust you have in one service or device in order to allow you to trust other services and devices. My dissertation won the 2010 ACM Doctoral Dissertation Award.

Earlier in my career, I studied computer science at Harvard University.

Mon 18 JanDisplayed time zone: Guadalajara, Mexico City, Monterrey change

09:00 - 10:00 | |||

09:00 60mTalk | Ironclad - Full Verification of Complex Systems VMCAI Bryan Parno Microsoft Research | ||