Main Track ACSOS 2021

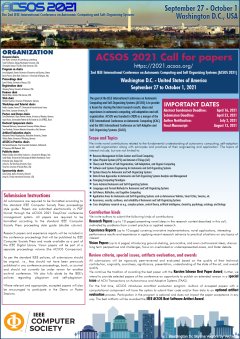

The goal of the IEEE International Conference on Autonomic Computing and Self-Organizing Systems (ACSOS) is to provide a forum for sharing the latest research results, ideas and experiences in autonomic computing, self-adaptation and self-organization. ACSOS was founded in 2020 as a merger of the IEEE International Conference on Autonomic Computing (ICAC) and the IEEE International Conference on Self-Adaptive and Self-Organizing Systems (SASO).

Emerging large-scale systems (including data centers, cloud computing, smart cities, cyber-physical systems, sensor networks, and embedded or pervasive environments) are becoming increasingly complex, heterogeneous, and difficult to manage. The challenges of designing, controlling, managing, monitoring, and evolving such complex systems in a principled way have led the scientific community to look for inspiration in diverse fields, such as biology, biochemistry, physics, complex systems, control theory, artificial intelligence, and sociology. To address these challenges, novel modeling and engineering techniques are needed that help to explain how local behavior and global behavior relate to each other. Such models and practices are a key condition for understanding, controlling, and designing the emergent behavior in autonomic and self-adaptive systems.

The mission of ACSOS is to provide an interdisciplinary forum for researchers and industry practitioners to address these challenges to make resources, applications, and systems more autonomic, self-adaptive, and self-organizing. ACSOS provides a venue to share and present their experiences, discuss challenges, and report state-of-the-art and in-progress research. The conference program will include technical research papers, in-practice experience reports, vision papers, posters, demos, and a doctoral symposium.

Tue 28 Sep

Displayed time zone: Eastern Time (US & Canada)

09:45 - 10:00 | |||

09:45 15m | Opening / Welcome Message Main Track Jean Botev University of Luxembourg, Tarek El-Ghazawi George Washington University, Christopher Stewart The Ohio State University, USA | ||

10:00 - 11:15 | |||

10:00 75mKeynote | Autonomic Stream Processing at Scale Main Track Dilma Da Silva Texas A&M | ||

11:45 - 12:50 | Theory and Practice of Self-* Systems Main Track at AUDITORIUM 1 Chair(s): Ada Diaconescu LTCI Lab, Telecom Paris, Institut Polytechnique de Paris | ||

11:45 25mPaper | Stochastic Switching of Power Levels can Accelerate Self-Organized Synchronization in Wireless Networks with Interference Main Track Jorge Schmidt Alpen-Adria-Universität Klagenfurt, Udo Schilcher Alpen-Adria-Universität Klagenfurt, Arke Vogell Alpen-Adria-Universität Klagenfurt, Christian Bettstetter Alpen-Adria-Universität Klagenfurt Pre-print | ||

12:10 25mPaper | Swarmalators with Stochastic Coupling and Memory (Karsten Schwan Best Paper Award) Main Track Udo Schilcher Alpen-Adria-Universität Klagenfurt, Jorge Schmidt Alpen-Adria-Universität Klagenfurt, Arke Vogell Alpen-Adria-Universität Klagenfurt, Christian Bettstetter Alpen-Adria-Universität Klagenfurt Pre-print | ||

12:35 15mShort-paper | Evolving Neuromodulated Controllers in Variable Environments Main Track Chloe Barnes Aston University, Anikó Ekárt Aston University, Birmingham, UK, Kai Olav Ellefsen University of Oslo, Kyrre Glette University of Oslo, Peter Lewis Ontario Tech University, Jim Tørresen University of Oslo | ||

Wed 29 Sep

10:00 - 11:15 | Keynote 2 Main Track at AUDITORIUM 1 Chair(s): Tarek El-Ghazawi George Washington University, Miaoqing Huang University of Arkansas | ||

10:00 75mKeynote | Advancing Science at Speed and Scale: Innovation, Translation & Advanced Cyberinfrastructure Main Track Manish Parashar NSF & University of Utah | ||

11:45 - 12:50 | Resource Management in Data Centers and Cloud Computing I Main Track at AUDITORIUM 1 Chair(s): Vana Kalogeraki Athens University of Economics and Business, Samuel Kounev University of Würzburg, Germany | ||

11:45 25mPaper | FaaSRank: Learning to Schedule Functions in Serverless Platforms Main Track Hanfei Yu University of Washington, Tacoma, Athirai Irissappane University of Washington, Tacoma, Hao Wang Louisiana State University, USA, Wes Lloyd University of Washington, Tacoma | ||

12:10 25mPaper | Many Models at the Edge: Characterizing and Improving Deep Inference via Model-Level Caching Main Track Samuel Ogden Worcester Polytechnic Institute, Guin Gilman Worcester Polytechnic Institute, Robert Walls Worcester Polytechnic Institute, Tian Guo Worcester Polytechnic Institute | ||

12:35 15mShort-paper | Empirical Characterization of User Reports About Cloud Failures Main Track Sacheendra Talluri Vrije Universiteit Amsterdam, Netherlands, Leon Overweel Dexter Energy, Laurens Versluis Vrije Universiteit Amsterdam, Animesh Trivedi Vrije Universiteit Amsterdam, Alexandru Iosup Vrije Universiteit Amsterdam | ||

11:45 - 12:50 | Cross-disciplinary research Main Track at AUDITORIUM 2 Chair(s): Alessandro Vittorio Papadopoulos Mälardalen University | ||

11:45 25mPaper | Timing configurations affect the macro-properties of multi-scale feedback systems Main Track Patricia Mellodge University of Hartford, Ada Diaconescu LTCI Lab, Telecom Paris, Institut Polytechnique de Paris, Louisa Jane Di Felice Universidad Autónoma de Barcelona | ||

12:10 25mPaper | Causal Inference Techniques for Microservice Performance Diagnosis: Evaluation and Guiding Recommendations Main Track Li Wu Elastisys AB/Technische Universität Berlin, Johan Tordsson Elastisys AB, Erik Elmroth Elastisys AB/Umeå University, Odej Kao Technische Universität Berlin | ||

12:35 15mShort-paper | A Meta Reinforcement Learning-based Approach for Self-Adaptive System Main Track Mingyue Zhang Peking University, China, Jialong Li Waseda University, Japan, Haiyan Zhao Peking University, Kenji Tei Waseda University / National Institute of Informatics, Japan, Shinichi Honiden Waseda University / National Institute of Informatics, Japan, Zhi Jin Peking University | ||

13:00 - 14:30 | Software and Systems Engineering for Autonomic and Self-Organizing Systems Main Track at AUDITORIUM 1 Chair(s): Aniruddha Gokhale Vanderbilt University | ||

13:00 25mPaper | LOS: Local-Optimistic Scheduling of Periodic Model Training For Anomaly Detection on Sensor Data Streams in Meshed Edge Networks Main Track Sören Becker TU Berlin, Florian Schmidt TU Berlin, Lauritz Thamsen TU Berlin, Ana Juan Ferrer Universitat Oberta de Catalunya, Odej Kao Technische Universität Berlin | ||

13:25 25mPaper | On Adapting SNMP as Communication Protocol in Distributed Control Loops for Self-adaptive Systems Main Track Ilja Shmelkin Technische Universität Dresden, Germany, Thomas Springer Technical University of Dresden | ||

13:50 25mPaper | Towards Highly Automated Machine-Learning-Empowered Monitoring of Motor Test Stands Main Track Diego Botache University of Kassel, Florian Bethke University of Kassel, Martin Hardieck University of Kassel, Maarten Bieshaar University of Kassel, Ludwig Brabetz University of Kassel, Mohamed Ayeb University of Kassel, Peter Zipf University of Kassel, Bernhard Sick University of Kassel | ||

14:15 15mShort-paper | Engineering Adaptive Authentication Main Track Alzubair Hassan University College Dublin, Bashar Nuseibeh The Open University (UK) & Lero (Ireland), Liliana Pasquale University College Dublin & Lero | ||

13:00 - 14:20 | Self-organization and Autonomic Computing for Cyber-Physical Systems Main Track at AUDITORIUM 2 Chair(s): Vinod Muthusamy IBM T.J. Watson Research | ||

13:00 25mPaper | A Self-Adaptive Load Balancing Approach for Software-Defined Networks in IoT Main Track Ziran Min Vanderbilt University, Hongyang Sun University of Kansas, Shunxing Bao Vanderbilt University, Aniruddha Gokhale Vanderbilt University, Swapna Gokhale University of Connecticut | ||

13:25 25mPaper | To do or not to do: finding causal relations in smart homes Main Track Kanvaly Fadiga Ecole Polytechnique, Ada Diaconescu LTCI Lab, Telecom Paris, Institut Polytechnique de Paris, Jean-Louis Dessalles LTCI Lab, Telecom ParisTech, Université Paris-Saclay, Étienne Houzé Télécom Paris Pre-print | ||

13:50 15mShort-paper | Self-organized Allocation of Dependent Tasks in Industrial Applications Main Track Ketong Zheng Technische Universität Dresden, Germany, Eva Julia Schmitt Technical University of Dresden, Arturo González Rodríguez Technical University of Dresden, Gerhard Fettweis Technical University of Dresden | ||

14:05 15mShort-paper | A Framework for Self-Explaining Systems in the Context of Intensive Care Main Track Börge Kordts University of Lübeck, Jan Patrick Kopetz University of Lübeck, Andreas Schrader University of Lübeck | ||

Thu 30 Sep

10:00 - 11:15 | |||

10:00 75mKeynote | Aggregation on an Artificial Patchy Environment Main Track Jean-Louis Deneubourg Free University of Brussels | ||

11:45 - 12:40 | Resource Management in Data Centers and Cloud Computing II Main Track at AUDITORIUM 1 Chair(s): Partha Pal Raytheon BBN Technologies | ||

11:45 15mShort-paper | AHA: Adaptive Hadoop in Ad-hoc Cloud Environments Main Track Ryan Liu University of Waterloo, Canada, Shizhe Lin University of Waterloo, Canada, Ladan Tahvildari University of Waterloo | ||

12:00 15mShort-paper | Architecture-based Evaluation of Scaling Policies for Cloud Applications Main Track Floriment Klinaku University of Stuttgart, Alireza Hakamian University of Stuttgart, Steffen Becker University of Stuttgart | ||

12:15 25mExperience report | Towards Situation-Aware Meta-Optimization of Adaptation Planning Strategies Main Track Veronika Lesch, Tanja Noack University of Hohenheim, Germany, Johannes Hefter University of Würzburg, Germany, Samuel Kounev University of Würzburg, Germany, Christian Krupitzer University of Hohenheim, Germany | ||

11:45 - 12:40 | Languages, formal methods, and assurances for Autonomic and Self-Organizing Systems Main Track at AUDITORIUM 2 Chair(s): Roberto Casadei University of Bologna, Italy | ||

11:45 25mPaper | Runtime Equilibrium Verification for Resilient Cyber-Physical Systems Main Track Matteo Camilli Free University of Bozen-Bolzano, Raffaela Mirandola Politecnico di Milano, Patrizia Scandurra University of Bergamo, Italy | ||

12:10 15mShort-paper | A Programming Language for Sound Self-Adaptive Systems Main Track | ||

12:25 15mVision and Emerging Results | Towards Mapping Control Theory and Software Engineering Properties using Specification Patterns Main Track Ricardo Caldas Chalmers, Razan Ghzouli Chalmers University of Technology & University of Gothenburg, Alessandro Vittorio Papadopoulos Mälardalen University, Patrizio Pelliccione Gran Sasso Science Institute (GSSI) and Chalmers | University of Gothenburg, Danny Weyns KU Leuven, Thorsten Berger Chalmers | University of Gothenburg Pre-print | ||

14:30 - 15:00 | |||

14:30 15m | Awards Main Track | ||

14:45 15m | Closing Statement / ACSOS 2022 Main Track | ||


Camera Ready Submission

Camera Ready Submission for Main Proceedings

STEP 1: Important Dates

- At least one author per paper must pay the registration fee early, by August 13, 2021.

- Failure to register will result in your paper not being included in the proceedings.

- Final camera-ready manuscripts must be submitted by August 20, 2021.

STEP 2: Page Limits

Your final paper must follow the page limits listed in the following table:

| Paper Type | Page Limit (including References) | Extra Pages Allowed |
| --- | --- | --- |
| Regular Research Papers | 10 | 2 |
| Short Research Papers | 6 | 1 |
| Experience Reports | 10 | 2 |

“Regular Research Papers”, “Short Research Papers”, and “Experience Reports” are allowed to include up to 2, 1, and 2 extra pages respectively at an additional charge of $100 (100 US Dollars) per page. Extra pages should be purchased at registration time.
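As a rough illustration of the arithmetic above, the following Python sketch computes the extra-page charge for a given paper type and page count. The function name `extra_page_fee` and the dictionary keys are hypothetical and chosen only for this example; the limits and the $100 per-page fee are taken from the table and paragraph above.

```python
# Page limit and maximum number of purchasable extra pages per paper type,
# as listed in the table above. The keys are illustrative labels only.
PAGE_RULES = {
    "regular": (10, 2),
    "short": (6, 1),
    "experience": (10, 2),
}

FEE_PER_EXTRA_PAGE_USD = 100  # charge per extra page, from the text above


def extra_page_fee(paper_type: str, pages: int) -> int:
    """Return the extra-page charge in US dollars for a paper of `pages` pages.

    Raises ValueError if the paper exceeds the limit plus the allowed extras.
    """
    limit, max_extra = PAGE_RULES[paper_type]
    extra = max(0, pages - limit)
    if extra > max_extra:
        raise ValueError(
            f"{paper_type} papers may have at most {limit + max_extra} pages"
        )
    return extra * FEE_PER_EXTRA_PAGE_USD
```

For example, `extra_page_fee("regular", 12)` returns 200, while a 6-page short paper incurs no charge.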

STEP 3: Formatting Your Paper

- Submitted abstracts should not exceed 200 words.

- Final submissions to ACSOS 2021 must be formatted in US-LETTER page size, must use the two-column IEEE conference proceedings format, and must be prepared in PDF format. Microsoft Word and LaTeX templates are available at the IEEE “Author Submission Site” HERE. The templates are available on the left-hand-side tab “Formatting Your Paper”.

- Please DO NOT include headers/footers or page numbers in the final submission.

STEP 4: Submitting Your Final Version

- Once the format of your paper has been verified and validated, you may submit your final version.

- All papers should be submitted using the submission system provided by IEEE “Author Submission Site” HERE.

- After you log in to the IEEE “Author Submission Site”, please follow the instructions as you click the “Next” button in the top-right corner of the site. Please enter the following information exactly as it appears on your paper: paper ID, names of authors, affiliations, countries, e-mail addresses, title, and abstract.

- To submit your final manuscript click HERE.

STEP 5: Submitting a Signed Copyright Release Form

- ACSOS 2021 requires users to submit a fully digital version of the electronic IEEE Copyright-release Form (eCF). eCF is provided at the IEEE “Author Submission Site”.

- Follow the instructions in the IEEE “Author Submission Site” to properly fill out and submit the IEEE Copyright-release Form (eCF), including:

1) Paper's full title

2) All authors' names

3) Conference title: 2021 IEEE International Conference on Autonomic Computing and Self-Organizing Systems (ACSOS 2021)

4) Signature (on appropriate line)

- The signed IEEE Copyright-release Form (eCF) should be submitted together with your camera-ready manuscript by August 20, 2021.

If you have any questions about the above procedures, please contact the Publications Chair Esam El-Araby: esam@ku.edu.

Note: Please complete each of the above steps - the conference organizers will not be responsible if your paper is omitted from the proceedings, is not available online on IEEE Xplore, or is subject to additional processing costs, if these steps are not performed.

Call for Papers

Important Dates

Abstract submission deadline: April 30th, 2021

Paper submission deadline: May 7th, 2021

Notification to authors: July 2nd, 2021

Camera ready: August 13th, 2021

ACSOS Conference: September 27th to October 1st, 2021

All times in Anywhere on Earth (AoE) timezone.

Scope

We invite novel contributions related to the fundamental understanding of autonomic computing, self-adaptation and self-organization along with principles and practices of their engineering and application. The topics of interest include, but are not limited to:

- Autonomic and Self-* system properties: robustness; resilience; efficient resource management; stability; anti-fragility; diversity; self-reference and reflection; emergent behavior; computational awareness and self-awareness;

- Autonomic and Self-* systems theory: bio-inspired and socially-inspired paradigms and heuristics; theoretical frameworks and models; languages and formal methods; queuing and control theory; requirement and goal expression techniques; uncertainty as a first class entity;

- Autonomic and Self-* systems engineering: reusable mechanisms and algorithms; design patterns; programming languages; architectures; operating systems and middlewares; testing and validation methodologies; runtime models; techniques for assurance; platforms and toolkits; multi-agent systems;

- Autonomic and Self-* systems practice: case studies from industry, experimental setups and data sets, experience reports with established autonomic and self-* software;

- Data-driven management: data mining; machine learning; data science and other statistical techniques to analyze, understand, and manage the behavior of complex systems or to establish self-awareness;

- Mechanisms and principles for self-organization and self-adaptation: inter-operation of self-* mechanisms; evolution, logic, and learning; addressing large-scale and decentralized systems;

- Socio-technical self-* systems: human and social factors; visualization; crowdsourcing and collective awareness;

- Autonomic and self-* concepts applied to hardware systems: self-* materials; self-construction; reconfigurable hardware, self-* properties for quantum computing;

- Convergence of artificial intelligence, cloud, and Internet of Things: moving artificial intelligence to the edge, collective decision processes, in-network learning, distributed reinforcement learning;

- Self-adaptive cybersecurity: intrusion detection, malware attribution, zero-trust networks and blockchain-based approaches, privacy in self-* systems;

- Cross disciplinary research: approaches that draw inspiration from complex systems, artificial intelligence, physics, chemistry, psychology, sociology, biology, and ethology.

We invite research papers applying autonomic and self-* approaches to a wide range of application areas, including (but not limited to):

- smart environments: -grids, -cities, -homes, and -manufacturing;

- Internet of things and cyber-physical systems;

- robotics, autonomous vehicles, and traffic management;

- cloud (including serverless), fog, edge computing and data centers;

- hypervisors, containerization services, orchestration, operating systems, and middleware;

- biological and bio-inspired systems.

Note that separate calls for Poster, Demo, and In-Practice Report Submissions will also be issued, as well as a call for participation in the Doctoral Symposium.

Submission Instructions

Research papers (up to 10 pages including images, tables, and references) should present novel ideas in the cross-disciplinary research context described in this call, motivated by problems from current practice or applied research.

Experience Reports (up to 10 pages including images, tables, and references) cover innovative implementations, novel applications, interesting performance results and experience in applying recent research advances to practical situations on any topics of interest.

Vision Papers (up to 6 pages including images, tables, and references) introduce ground-shaking, provocative, and even controversial ideas; discuss long term perspectives and challenges; focus on overlooked or underrepresented areas, and foster debate.

All submissions must indicate a primary and (optionally) a secondary topic area from the following list:

- RM: Resource Management in Data Centers and Cloud Computing

- CPS: Cyber-Physical Systems (CPS) and Internet of Things (IoT)

- SOSA: Theory and Practice of Self-Organization, Self-Adaptation, and Organic Computing

- ENG: Software and Systems Engineering for Autonomic and Self-Organizing Systems

- SYS: Systems theory for Autonomic and Self-Organizing Systems

- DATA: Data-Driven Approaches to Autonomic and Self-Organizing Systems Analysis and Management

- NEW: Emerging Computing Paradigms

- SOC: Socio-technical Autonomic and Self-Organizing Systems

- LANG: Languages and Formal Methods for Autonomic and Self-Organizing Systems

- COG: Self-Aware, Reflective, and Cognitive Computing

- APP: Application Areas for Autonomic and Self-Organizing Systems such as Autonomous Vehicles, Smart Cities, Swarms, etc.

- REL: Assurances, security, resilience, and reliability of Autonomic and Self-Organizing Systems

- CROSS: Cross-disciplinary research on e.g., complex systems, control theory, artificial intelligence, chemistry, psychology, sociology, and biology

All submissions are required to be formatted according to the standard IEEE Computer Society Press proceedings style guide. Papers are submitted electronically in PDF format through the ACSOS 2021 conference management system: https://easychair.org/conferences/?conf=acsos2021

Research papers and experience reports will be included in the conference proceedings that will be published by IEEE Computer Society Press and made available as a part of the IEEE Digital Library. Vision papers will be part of a separate proceedings volume (the ACSOS Companion).

As per the standard IEEE policies, all submissions should be original, i.e., they should not have been previously published in any conference proceedings, book, or journal and should not currently be under review for another archival conference. We would like to also highlight IEEE’s policies regarding plagiarism and self-plagiarism: https://www.ieee.org/publications/rights/plagiarism/id-plagiarism.html. Where relevant and appropriate, accepted papers will also be encouraged to participate in the Demo or Poster Sessions.

Review Criteria

Research papers should highlight both theoretical and empirical contributions, substantiated by formal analysis, simulation, experimental evaluations, or comparative studies. Appropriate references must be made to related work. Due to the cross-disciplinary nature of the ACSOS conference, we encourage papers to be intelligible and relevant to researchers who are not members of the same specialized sub-field. Moreover, research papers should provide an indication of the real-world relevance of the problem that is solved, including a description of the domain, and an evaluation of performance, usability, and/or comparison to alternative approaches.

Experience reports should provide insights into any aspect of design, implementation or management of self-* systems that would be of benefit to practitioners and the ACSOS community.

Vision Papers should introduce innovative, risky, visionary, and provocative ideas, spotlighting overlooked areas, raising controversial points, and exploring cross-disciplinary contaminations.

All submissions will be subject to a rigorous single-blind peer-review and evaluated based on the quality of their technical contribution, originality, soundness, significance, presentation, understanding of the state of the art, and overall quality.

We intend to continue the tradition of giving the best papers of the conference an opportunity to publish an extended version in a special issue of ACM Transactions on Autonomous and Adaptive Systems (TAAS). The Karsten Schwan Best Paper Award will be awarded to a selected paper.

NEW! — The ACSOS Artifact Evaluation program

For the first time, ACSOS introduces an artifact evaluation program. To improve reproducibility of results, authors of accepted papers with a computational component are invited to submit their code and/or their data to an optional artifact evaluation process. A dedicated committee will be in charge of reproducing the results presented in the accepted papers. Participation in the program is optional and does not impact the paper acceptance in any way. The best artifacts will be awarded the IEEE ACSOS Best Software Artifact Award. Artifacts that are successfully reproduced will be highlighted in the conference program, and the corresponding camera-ready papers will carry a badge of reproducibility.

For more information, please refer to the ACSOS Artifact Evaluation program page.

Posters and Demos

Posters provide a forum for authors to present their work in an informal and interactive setting. They allow authors and interested participants to engage in discussions about their work. Demonstrations should present an existing tool or research prototype. Authors are expected to perform a live demonstration on their own hardware during the poster and demonstration session. Contributions will be collected in ACSOS Companion Proceedings, published by IEEE Computer Society Press, and made available as a part of the IEEE Digital Library.

For more information, please refer to the ACSOS 2021 call for posters and demos.

Workshops and Tutorials

ACSOS workshops will provide a meeting place for presenting novel ideas in a less formal and possibly more focused way than the main conference itself. Their aim is to stimulate and facilitate active exchange, interaction, and comparison of approaches, methods, and ideas. Contributions will be collected in ACSOS Companion Proceedings, published by IEEE Computer Society Press, and made available as a part of the IEEE Digital Library.

For more information, please refer to the ACSOS 2021 call for workshops and tutorials.

Doctoral Symposium

The Doctoral Symposium provides an international forum for PhD students working in ACSOS-related research fields to present their work to a diverse audience of leading experts in the field, to gain both insightful feedback and discussion points around their research as well as the invaluable experience of presenting new research to an international audience. Contributions will be collected in ACSOS Companion Proceedings, published by IEEE Computer Society Press, and made available as a part of the IEEE Digital Library.

For more information, please refer to the ACSOS 2021 Doctoral Symposium call for participation.

ACSOS in practice: Accelerating Convergence between Academia and Industry (ACAI)

ACAI@ACSOS features short vision talks and opportunities for researchers to brainstorm potential collaborations. We ask ALL ACAI attendees to submit information about their interests, qualifications and visions for ACAI. Contributions will be collected in ACSOS Companion Proceedings, published by IEEE Computer Society Press, and made available as a part of the IEEE Digital Library.

For more information, please refer to the ACAI@ACSOS2021 call for papers.

Call for Artifacts

Artifact Evaluation

Artifact Evaluation (AE) for ACSOS is an optional evaluation process for research works that have been accepted for publication at ACSOS. Due to the systems-oriented scope of ACSOS, authors are strongly encouraged to submit artifacts to increase the value and the visibility of their research.

The AE process seeks to further the goal of reproducible science. It offers authors the opportunity to highlight the reproducibility of their results, and to obtain a validation given by the community for the experiments and data reported in their paper. In the AE process, peer practitioners from the community will follow the instructions included in the artifacts and give feedback to the authors, while keeping papers and artifacts confidential and under the control of the authors.

The AE process is non-competitive and success-oriented. The acceptance of the papers has already been decided before the AE process starts and the hope is that all submitted artifacts will pass the evaluation criteria. Authors of artifacts are expected to interact with the evaluators to fix any possible technical issues that may emerge during the review process and to improve the portability of the artifact.

Authors of papers corresponding to artifacts that pass the evaluation will be entitled to include, in the camera-ready version of their paper, an IEEE AE seal that indicates that the artifact has passed the repeatability test. Authors are also entitled, and indeed encouraged, to use this IEEE AE seal on the title slide of the corresponding presentation at ACSOS’21.

Artifact Submission

Artifacts should be submitted to https://easychair.org/conferences/?conf=acsos2021

The submission will require the following documents:

- The paper associated with the artifact in a form that is as close as possible to the final camera-ready

- A persistent URL pointing to the artifact (could be protected if not Open-Source)

- A video presenting the artifact (optional)

When submitting an artifact for evaluation, please provide a document (e.g., a PDF, HTML, or text file) with instructions on:

- the system requirements;

- how to use the packaged artifact (please reference the specific figures and tables in the paper that will be reproduced), and how to set up the artifact on a machine different from the provided packaged artifact;

- how to reproduce the results in the paper.

The document should include a link to the packaged artifact (e.g., a virtual machine image) and a description of key configuration parameters for the packaged artifact (including required hardware characteristics such as type of architecture, RAM, number of cores, etc.).

Please provide precise instructions on how to proceed after booting the image, including the instructions for running the artifact. Authors are strongly encouraged to prepare readable scripts to launch the experiments automatically. If the experiments take a long time to complete, the authors may prepare simplified experiments (e.g., by reducing the number of samples over which the results are averaged) with shorter running times that demonstrate the same trends as observed in the complete experiments (and as reported in the paper).
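One possible shape for such a launch script is sketched below in Python. It is only an illustration under stated assumptions: `run_trial` is a hypothetical placeholder for a single experiment run, the sample counts and the `--quick` and `--seed` flags are invented for this example, and a real artifact would substitute its own experiment and parameters.

```python
import argparse
import random
import statistics

# Hypothetical sample counts: the full run matches the paper, the quick run
# is the simplified experiment with fewer samples and a shorter running time.
FULL_SAMPLES = 1000
QUICK_SAMPLES = 50


def run_trial(rng: random.Random) -> float:
    """Placeholder for one experiment run; returns a single measurement."""
    return rng.gauss(10.0, 1.0)


def run_experiment(num_samples: int, seed: int = 0) -> float:
    """Average the measurement over `num_samples` seeded trials."""
    rng = random.Random(seed)
    return statistics.mean(run_trial(rng) for _ in range(num_samples))


def parse_args(argv=None) -> argparse.Namespace:
    parser = argparse.ArgumentParser(description="Reproduce the averaged result.")
    parser.add_argument("--quick", action="store_true",
                        help="run the simplified experiment with fewer samples")
    parser.add_argument("--seed", type=int, default=0,
                        help="random seed, fixed so that runs are repeatable")
    return parser.parse_args(argv)


def main(argv=None) -> float:
    args = parse_args(argv)
    num_samples = QUICK_SAMPLES if args.quick else FULL_SAMPLES
    result = run_experiment(num_samples, args.seed)
    print(f"samples={num_samples} seed={args.seed} mean={result:.3f}")
    return result
```

Calling `main(["--quick"])` runs the reduced experiment, and `main([])` runs the full one. Fixing the seed keeps repeated runs identical, which makes it easier for evaluators to compare their output with the documented one.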

Finally, be sure to include a version of the accepted paper related to the artifact that is as close as possible to the final camera-ready version.

A good “how-to” guide for preparing an effective artifact evaluation package is available online at http://bit.ly/HOWTO-AEC.

Important Dates

Artifact submission deadline: Jul 9, 2021

Artifact notification: Aug 6, 2021

The evaluators may give early feedback if there are any issues with the artifacts that prevent them from being run correctly. Authors will be able to interact with evaluators to enhance their artifacts, while preserving the anonymous review process.

An image with an AE stamp will be provided to be included in the camera-ready paper.

Special Artifacts

If you are not in a position to prepare the artifact as above, or if your artifact requires special libraries, commercial tools (e.g., MATLAB or specific toolboxes), or particular hardware, please contact the AE co-chairs as soon as possible: artifacts@acsos.org.

Evaluation Criteria

The artifact evaluation criteria are similar to those previously used by other conferences in their repeatability and AE processes. Submissions will be judged based on three criteria, namely coverage, instructions, and quality (see more details below), where each criterion is assessed on the following five-point scale:

- significantly exceeds expectations (5),

- exceeds expectations (4),

- meets expectations (3),

- falls below expectations (2),

- missing or significantly falls below expectations (1).

In order to be judged ‘repeatable’ an artifact must generally ‘meet expectations’ (average score of 3 or more), and must not have any missing elements (no scores of 1). Each artifact is evaluated independently according to the listed objective criteria. The higher scores (‘exceeds’ or ‘significantly exceeds expectations’) in the criteria will be considered aspirational goals, not requirements for acceptance.
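The decision rule above can be sketched in a few lines of Python. This is an illustrative reading of the rule, not the committee's actual procedure: the function name `is_repeatable` is hypothetical, and since the text says an artifact must "generally" meet expectations, evaluators may still exercise judgment beyond this check.

```python
def is_repeatable(coverage: int, instructions: int, quality: int) -> bool:
    """Apply the stated rule: average score of 3 or more and no score of 1.

    Each argument is a score on the five-point scale (1 to 5).
    """
    scores = (coverage, instructions, quality)
    if any(score not in range(1, 6) for score in scores):
        raise ValueError("each score must be an integer from 1 to 5")
    # Compare sums instead of a floating-point average to keep the check exact.
    average_ok = sum(scores) >= 3 * len(scores)
    no_missing_elements = min(scores) > 1
    return average_ok and no_missing_elements
```

For instance, `is_repeatable(5, 5, 1)` returns `False` even though the average exceeds 3, because one criterion is scored as missing.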

Artifact Packaging Recommendations

Based on previous experience, the biggest hurdle to successful reproducibility is the setup and installation of the necessary libraries and dependencies. Authors are therefore encouraged to prepare a virtual machine (VM) image including their artifact (if possible), or a dockerized version of it, and to make it available via HTTP throughout the evaluation process (and, ideally, afterwards). As the basis of a VM image, please choose commonly used OS versions that have been tested with the virtual machine software and that evaluators are likely to be accustomed to. For this, we encourage authors to use VirtualBox (https://www.virtualbox.org) and save the VM image as an Open Virtual Appliance (OVA) file. To facilitate the preparation of the VM, we suggest using the VM images available at https://www.osboxes.org/. We invite authors to check out Zenodo (https://zenodo.org) for citable artifact sharing and archiving and/or CodeOcean Capsules (https://codeocean.com/), which also allow for remote execution of various languages including MATLAB and R. This is especially suitable in cases when no special hardware or measurement setup is required.

Criterion 1: Coverage for repeatability

The focus is on figures or tables in the paper containing computationally generated or processed experimental evidence used to support the claims of the paper. Other figures and tables, such as illustrations or tables listing only parameter values, are not considered in this calculation.

Note that satisfying this criterion does not require that the corresponding figures or tables be recreated in exactly the same format as appears in the paper, merely that the data underlying those figures or tables be generated faithfully in a recognizable format.

A repeatable element is one for which the computation can be rerun by following the instructions provided with the artifact in a suitably equipped environment.
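As a rough illustration of how coverage might be tallied, the sketch below assumes that coverage is the fraction of qualifying figures and tables that are repeatable. The `Element` class, its field names, and the `coverage` function are hypothetical, and the mapping from this fraction to a score is not reproduced here.

```python
from dataclasses import dataclass


@dataclass
class Element:
    label: str           # e.g. "Figure 3" or "Table 2"
    computational: bool  # holds computationally generated or processed evidence
    repeatable: bool     # can be rerun by following the artifact's instructions


def coverage(elements):
    """Fraction of qualifying elements that are repeatable (assumed definition)."""
    # Illustrations and parameter-only tables are excluded from the count.
    counted = [e for e in elements if e.computational]
    if not counted:
        raise ValueError("no computational figures or tables to evaluate")
    return sum(e.repeatable for e in counted) / len(counted)


paper = [
    Element("Figure 1", computational=False, repeatable=False),  # illustration
    Element("Figure 2", computational=True, repeatable=True),
    Element("Figure 3", computational=True, repeatable=True),
    Element("Table 1", computational=True, repeatable=False),
    Element("Table 2", computational=True, repeatable=True),
]
print(coverage(paper))  # 0.75
```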

Criterion 2: Instruction

Instructions are intended for other practitioners to be able to recreate the paper’s computationally generated results. The categories for this criterion are:

- None (missing / 1): No instructions were included in the artifact.

- Rudimentary (falls below expectations / 2): The instructions specify a script or command to run, but little else.

- Complete (meets expectations / 3): For every computational element that is repeatable, there is a specific instruction which explains how to repeat it. The environment under which the software was originally run is described.

- Comprehensive (exceeds expectations / 4): For every computational element that is repeatable, there is a single command or clearly defined short series of steps which recreates that element almost exactly as it appears in the published paper (the file format, fonts, line styles, etc. might not be the same, but the content of the element is the same). In addition to identifying the specific environment under which the software was originally run, a broader class of environments is identified under which it could run.

- Outstanding (significantly exceeds expectations / 5): In addition to the criteria for a comprehensive set of instructions, explanations are provided of:

  - all the major components / modules in the software,

  - important design decisions made during implementation,

  - how to modify / extend the software, and/or

  - what environments / modifications would break the software.
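One way to offer "a single command" per repeatable element is a small dispatcher script. The sketch below is a hypothetical layout, not a required one: the script structure, the element name `figure3`, the column names, and the placeholder computation are all assumptions made for illustration.

```python
import argparse
import csv
import sys


def figure_3(out):
    """Hypothetical: regenerate the data behind 'Figure 3' as CSV."""
    writer = csv.writer(out)
    writer.writerow(["load", "latency_ms"])  # header row documents the columns
    for load in (1, 2, 4):
        # Placeholder computation; a real artifact would rerun the experiment.
        writer.writerow([load, 10 * load])


# One entry per repeatable figure or table in the paper.
ELEMENTS = {"figure3": figure_3}


def main(argv):
    parser = argparse.ArgumentParser(
        description="Recreate one computational element of the paper."
    )
    parser.add_argument("element", choices=sorted(ELEMENTS))
    args = parser.parse_args(argv)
    ELEMENTS[args.element](sys.stdout)
    return 0
```

Calling `main(sys.argv[1:])` from the script's entry point would let an evaluator recreate an element with one command, for example `python reproduce.py figure3`, where `reproduce.py` is an assumed file name.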

Criterion 3: Quality

This criterion explores the means provided to infer, show, or prove trustworthiness of the software and its results. A set of scripts that exactly recreates, for example, the figures from the paper certainly aids repeatability. However, without well-documented code it is hard to understand how the data in that figure was processed, without well-documented data it is hard to determine whether the input is correct, and without testing it is hard to determine whether the results can be trusted.

If there are tests in the artifact which are not included in the paper, they should at least be mentioned in the instructions document. Documentation of test details can be put into the instructions document or into a separate document in the artifact.

The categories for this criterion are:

- None (missing / 1): There is no evidence of software documentation or testing.

- Rudimentary documentation (falls below expectations / 2): The purpose of almost all files is documented (preferably within the file, but otherwise in the instructions or a separate README file).

- Comprehensive documentation (meets expectations / 3): The purpose of almost all files is documented. Within source code files, almost all classes, methods, attributes and variables are given clear, descriptive names and/or documentation of their purpose. Within data files, the format and structure of the data is documented; for example, in comma-separated values (CSV) files there is a header row and/or comments explaining the contents of each column.

- Comprehensive documentation and rudimentary testing (exceeds expectations / 4): In addition to the criteria for comprehensive documentation, there are identified test cases with known solutions which can be run to validate at least some components of the code.

- Comprehensive documentation and testing (significantly exceeds expectations / 5): In addition to the criteria for comprehensive documentation, there are clearly identified unit tests (preferably run within a unit test framework) which exercise a significant fraction of the smaller components of the code (individual functions and classes) and system-level tests which exercise a significant fraction of the full package. Unit tests are typically self-documenting, but the system-level tests will require documentation of at least the source of the known solution.
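A "test case with a known solution" can be as small as the following sketch, written with Python's standard `unittest` framework. The function `mean_response_time` is a hypothetical stand-in for a component of an artifact; it is not taken from any submission.

```python
import unittest


def mean_response_time(samples):
    """Hypothetical component under test: arithmetic mean of the samples."""
    if not samples:
        raise ValueError("no samples")
    return sum(samples) / len(samples)


class MeanResponseTimeTest(unittest.TestCase):
    def test_known_solution(self):
        # Known solution worked out by hand: (10 + 20 + 30) / 3 = 20.
        self.assertAlmostEqual(mean_response_time([10, 20, 30]), 20.0)

    def test_empty_input_is_rejected(self):
        with self.assertRaises(ValueError):
            mean_response_time([])
```

If the tests are saved in a file discoverable by the framework, `python -m unittest` runs them. Noting the source of each known solution in a comment, as above, covers the documentation that system-level tests in particular require.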

Further Questions

In case of any questions or concerns, or for advice on how to best package and submit complex artifacts, please contact the Artifact Evaluation Co-Chairs.

Chairs

Nikolas Herbst, U. Würzburg, Germany

Alessandro V. Papadopoulos, Mälardalen U., Sweden

Contact: artifacts@acsos.org